Project

Researching how mathematical skewing of progress bar loading affects users' perception of time

Duration

September 2019—Now

My responsibilities/role

Academic researcher

Technologies

Self-made testing website

Context

In today’s software, progress bars of all types are very common, and users come across them everywhere, from their personal computers to public places such as ATMs. Some of the processes visualized by progress bars consist of multiple subprocesses, some of which may run at a variable speed and cause the progress bar to decelerate or stop. This work aims to broaden the research by Harrison et al. [1] on progress bar distortions that can reduce the overall perceived time of progress bar loading. To do so, I designed and built a website with a questionnaire and then conducted two separate experiments (laboratory and public). Based on the collected data, the work describes the mutual relationships between different distorting functions and rates how strongly each reduces the perceived time. In addition, a new phenomenon, the “second shown progress bar”, was discovered.

This article is a shortened version of the original work, available here.

1 Concept

Progress bars are graphical visualizations of the progress of currently running processes. They let the user easily estimate how much work has already been done and how much time the rest will take. Progress bars are widely used in entertainment as well as in professional software, so users encounter them everywhere, from computer games, mobile applications and ATMs to the software they use at work. The problem with progress bars is that processes are often delayed unexpectedly, which makes the progress bar pause or decelerate. According to Harrison et al. [1], such a progress bar tends to be perceived as taking longer than one that loads for the same period at a linear rate. Harrison et al. therefore suggested that distorting the projected data might improve the overall impression of the process length. Moreover, they claim that using the Peak-and-End effect [2] can even make a progress bar look faster than it is. The aim of this work is to verify some of the

questionable results of the original work by Harrison et al. and also to broaden the research by

investigating unexamined distorting functions and their mutual relations when they are used on

progress bars. Another focus of this work will be on the behavior of users facing the distortions. To increase the relevance of the results, the study will consist of two experiments performed in

two different environments. Collected data will then be also used to research how an environment,

specialization, and age can affect the final results. By studying these functions and their

properties, it will be possible to say if some functions can suppress a negative behavior of

progress bars without knowing what the behavior will be like (e.g., if it will stop, slow down or

load linearly).

The original work has a few imperfections that I do not consider crucial, but they might become problematic when broadening the research. Therefore, it is important to correct those imperfections and, based on these changes, develop the conceptual model of the research itself.

1.1 Problems with “Rethinking the Progress Bar” by Harrison et al.

The first problem is that Harrison et al. used an unrepresentative sample of 22 respondents (14 male, 8 female), all from two computer research laboratories. One of the most important UX principles is that research should be done on a broad range of people with different personal backgrounds. A group of respondents consisting only of IT specialists is too narrow to support the claim that the conclusions of Harrison's team are generally applicable. Apart from that, IT specialists are a very specific group for any kind of UX testing. Since they use different kinds of user interfaces on a daily basis and know many shortcuts and facilitations, they are used to elements that an average user often is not, and they are able to cope with difficult situations more easily. Therefore, the chosen sample might have affected the results, especially with such a small number of respondents.

Another questionable factor is that the users were tested in their own offices. The authors do not mention whether the environmental conditions during the testing were controlled (e.g., whether other colleagues were present). If they were, then on the one hand the moderator provided guided testing, so users could ask whenever they did not completely understand the assignment or had any problem. Also, assuming from the paper that there were no distractions during the testing, the users were not disturbed by other factors from the surrounding world. On the other hand, the ecological validity could be improved by letting the users face everyday distractions (e.g., listening to music or people talking nearby).

During the testing, each progress bar took 5.5 seconds to load. Based on a short empirical test I conducted, I assume that this duration is not sufficient to show the characteristics of the different distortions. A related problem is that certain distortions, especially the wavy ones, do not behave naturally over such a short period, and users would rarely encounter such progress bar behavior in real life.

According to the pilot testing of this research (see Chapter 3), some of the users started to lose attention even before they finished their 20th 8-second comparison. Therefore, I assume that letting users compare 45 different pairs of progress bars, as in the original work, could affect the relevance of the results.

Figure 1: Linear function

Figure 2: Power function

Figure 3: "Wave after Power" function

Figure 4: "Wave after Pause" function

Figure 5: "Pause after Power" function

1.2 Broadening the Research and Conceptual Changes

Although I assume the original work has imperfections, I do not suppose

that these imperfections could have an essential effect on the statistical preference.

Supposing that the original conclusions are correct, I created new functions to see which functions are dominant over the others and to be able to add them to the ordered system created by Harrison et al. A function is dominant if it can suppress the effect of another function that makes the progress bar seem slower or faster than it actually is. I selected one representative from each of the hierarchy groups [1, p. 117, Figure 3] and composed these functions together.

See the final set of functions in Figures 1 to 5. The composition order of the functions was chosen to make their characteristics more visible.
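The composition of distorting functions can be illustrated with a minimal sketch. The exact formulas used in the experiment are not reproduced in this article, so every formula, parameter value and helper name below (`power`, `wave`, `early_pause`, `compose`) is an assumption chosen only to show the idea: each distortion maps actual progress in [0, 1] to displayed progress in [0, 1], and "A after B" is read here as applying B first and then A.

```python
import math
from typing import Callable

# A distortion maps actual progress in [0, 1] to displayed progress in [0, 1].
Distortion = Callable[[float], float]


def linear(x: float) -> float:
    """Displayed progress equals actual progress."""
    return x


def power(x: float, exponent: float = 2.0) -> float:
    """Slow start, fast finish. The exponent is an assumed value."""
    return x ** exponent


def wave(x: float, cycles: int = 3, amplitude: float = 0.8) -> float:
    """Periodic speeding up and slowing down around the linear trend.

    With amplitude <= 1 the slope 1 + amplitude * cos(...) is never
    negative, so the bar never moves backwards.
    """
    k = 2 * math.pi * cycles
    return x + amplitude * math.sin(k * x) / k


def early_pause(x: float, start: float = 0.25, end: float = 0.5) -> float:
    """Linear until `start`, frozen until `end`, then linear up to 1."""
    if x < start:
        return x
    if x < end:
        return start
    return start + (x - end) * (1 - start) / (1 - end)


def compose(outer: Distortion, inner: Distortion) -> Distortion:
    """Return the distortion x -> outer(inner(x))."""
    return lambda x: outer(inner(x))


# Assumed reading of the names: "A after B" applies B first, then A.
wave_after_power = compose(wave, power)
wave_after_early_pause = compose(wave, early_pause)
early_pause_after_power = compose(early_pause, power)
```

Because every building block starts at 0, ends at 1 and never decreases, any composition of them is again a valid progress bar mapping.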

In contrast to the original work, the domain of rating values has been enlarged so that users can express how much faster one function seems than another. The domain has been changed from the original values -1, 0, 1 to the new values -5, -4, -3, -2, -1, 0, 1, 2, 3, 4, 5.

To deal with the unrepresentative sample of respondents and the environmental conditions, two samples of users were tested in two different environments.

The first group answered the questions in the controlled conditions of the school laboratory. The sessions were held in groups of 4 to 12 respondents, depending on the date. The respondents were spaced out so that nobody sat directly next to another respondent, to avoid being influenced by others.

The second group took part in a public experiment, which was intended to have a more even distribution of specialization and age and to be completed at home on the users’ own computers. These factors should increase the ecological validity of the whole research by allowing me to compare two experiments, one in controlled and one in uncontrolled environmental conditions. A public link to the website with the experiment was sent out to people and shared on social media.

To keep the users’ attention and their interest in finishing the test (mostly in the public testing), I adjusted the number of questions the users had to answer. In the post-testing interviews of the pilot testing (see Chapter 3), the users were sceptical about the number of questions and told me that they started to lose attention at the final questions and that, had they not been asked personally to complete the test, they would probably not have finished it. To prevent this behaviour, the number of questions was reduced to 10 per respondent in the public testing and kept at 20 in the laboratory testing (compared with 45 in the original research).

To prevent the results from being affected by the order in which the comparison pairs were shown, their order had to be shuffled. Unlike in the original work, users were assigned different patterns of comparison pairs. Every pattern compares every distortion to all the others, excluding the comparison of a distortion to itself. Therefore, for the 5 mathematical functions used in this research, every pattern had 10 questions (5 · 4 / 2 unordered pairs), which, for the laboratory testing, were also duplicated in reverse comparison order. That was done to track whether the order within a comparison pair affects the user’s perception, as Harrison et al. have claimed. Laboratory patterns therefore had 20 questions, in which respondents compared both distortion A to distortion B and distortion B to distortion A. To get answers of the same relevance from both types of testing, the public testing (having only 10 questions) used 8 different patterns instead of the 4 used in the laboratory, so that some users compared distortion A to B and others compared distortion B to A. The order of comparisons within the patterns was pseudorandomized.
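The pattern sizes described above can be checked with a short sketch. How the individual patterns were actually constructed is not described in this article, so the functions `laboratory_pattern` and `public_patterns`, the use of seeds, and the way public patterns are paired are assumptions made for illustration only.

```python
import itertools
import random

FUNCTIONS = [
    "Linear",
    "Power",
    "Wave after Power",
    "Wave after Early Pause",
    "Early Pause after Power",
]


def laboratory_pattern(seed):
    """All 20 ordered pairs (A vs. B and B vs. A) in a seeded random order."""
    pairs = list(itertools.permutations(FUNCTIONS, 2))
    random.Random(seed).shuffle(pairs)
    return pairs


def public_patterns(seed):
    """Two complementary 10-question patterns.

    Each unordered pair appears once in each pattern, in opposite orders,
    so both orders are covered across users.
    """
    rng = random.Random(seed)
    first, second = [], []
    for a, b in itertools.combinations(FUNCTIONS, 2):
        if rng.random() < 0.5:
            a, b = b, a
        first.append((a, b))
        second.append((b, a))
    rng.shuffle(first)
    rng.shuffle(second)
    return first, second


print(len(laboratory_pattern(1)))   # 20
print(len(public_patterns(1)[0]))   # 10
```

Under this assumed construction, four seeds would yield four laboratory patterns and four complementary pairs of public patterns, that is, eight public patterns in total.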

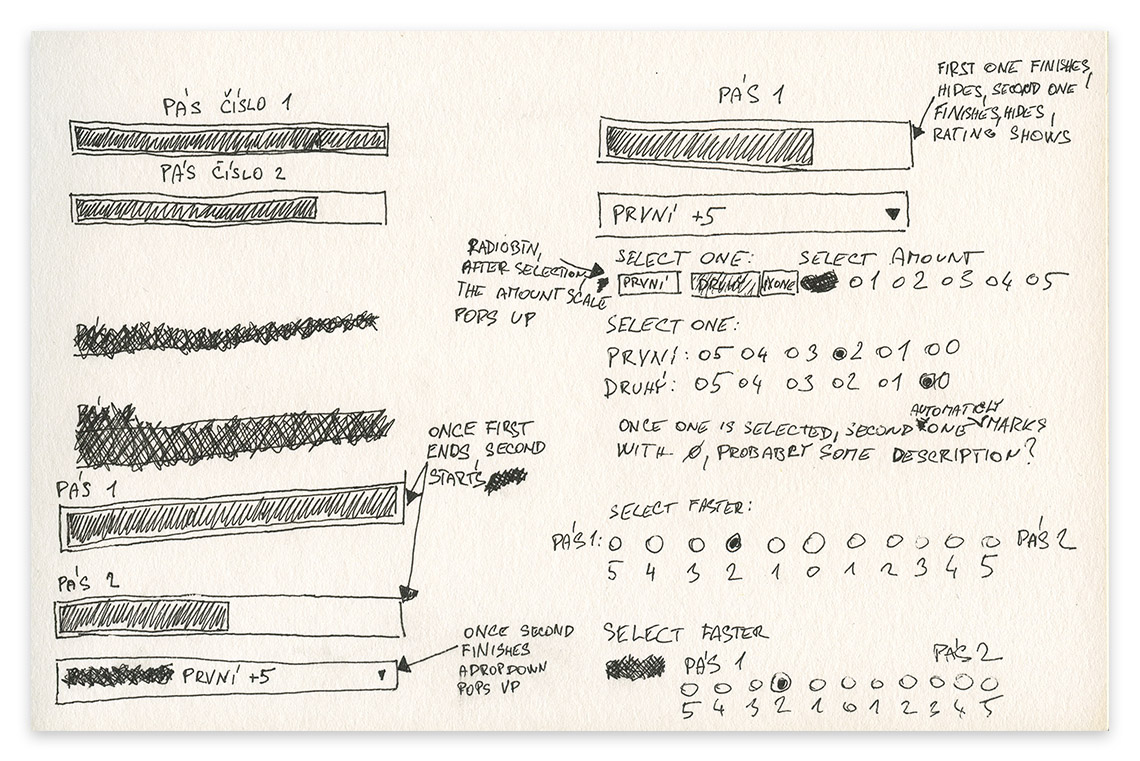

Changes were also made to the graphical user interface of the application.

The testing is web-based to allow users to complete the questionnaire from home. At the very beginning, users go through an introduction, instructions and a personal information form, followed by a preview comparison. In the laboratory testing, a user can also ask the moderator if any information is unclear. During each comparison, the first progress bar is shown; once it finishes, it disappears and the second one is shown. After the second bar completes, it disappears too and the comparison panel is shown. Users then choose, on a scale running from 5 down to 0 on each side (11 buttons in total), which function they perceived as faster and by how much. This lets users express their impression more accurately than by simply preferring one option or the other. A maximum value of 5 was considered large enough to express the degree of preference for either side, based on the number of options in the most widely used questionnaires in UX studies [3].

1.3 Expectations

The main focus is to find out which functions are dominant over the others, meaning that they can suppress the perception effect of the other function, and especially whether the Peak-and-End effect of a function can suppress the trends of other functions that were perceived as slower than the linear function. The expectation is that the function “Early Pause after Power” will dominate over all other functions.

Another focus is to find out how the functions compare pairwise and whether a cycle may exist in the mutual comparisons. The expectation is that the functions created by composing two functions, one of which is “Power”, will be perceived as faster than the linear function, whereas “Wave after Early Pause” will be perceived as the slowest of all.

The last hypothesis is that there will be a statistical difference between the answers of different demographic groups (men vs. women, technicians vs. others, millennials [4] vs. older users).

2 Design

After defining the concept of the research, I started to design the

interface using UX methods to create an easily usable interface without bugs and ambiguous

elements.

During the design process, I focused on creating a friendly environment that is clear and easily learnable for anybody. The reason for focusing on learnability is

that the application was going to be presented online without the possibility of asking the

moderator of the experiment for help. Therefore, the instructions had to be clear and

sufficiently detailed, but on the other hand, the interface had to be very clean so that the

users focused only on the task that was given at the time (reading, watching the progress bar,

etc.).

For more information about the design process of GUI (sketches, lo-fi and

hi-fi prototypes), see the full-text work.

3 Testing

The whole testing had two parts. The first part was pilot testing that was

carried out on a small sample of users with the aim of revealing the weaknesses of the

experiments. It was conducted with four users on the laboratory version of the questionnaire. The users had no serious trouble understanding the instructions during the research. During

the post-research interview, they had some comments and suggestions (see full-text

version for more information).

After the pilot testing, the bugs and other weaknesses were fixed, and both parts of the main testing started.

The main testing consisted of two separate experiments: public testing conducted on anonymous Internet users and laboratory testing conducted on respondents invited to a laboratory. The findings from the pilot testing were incorporated into the final design of the testing application. The source code of the website is available in the University Archive.

3.1 Laboratory Testing

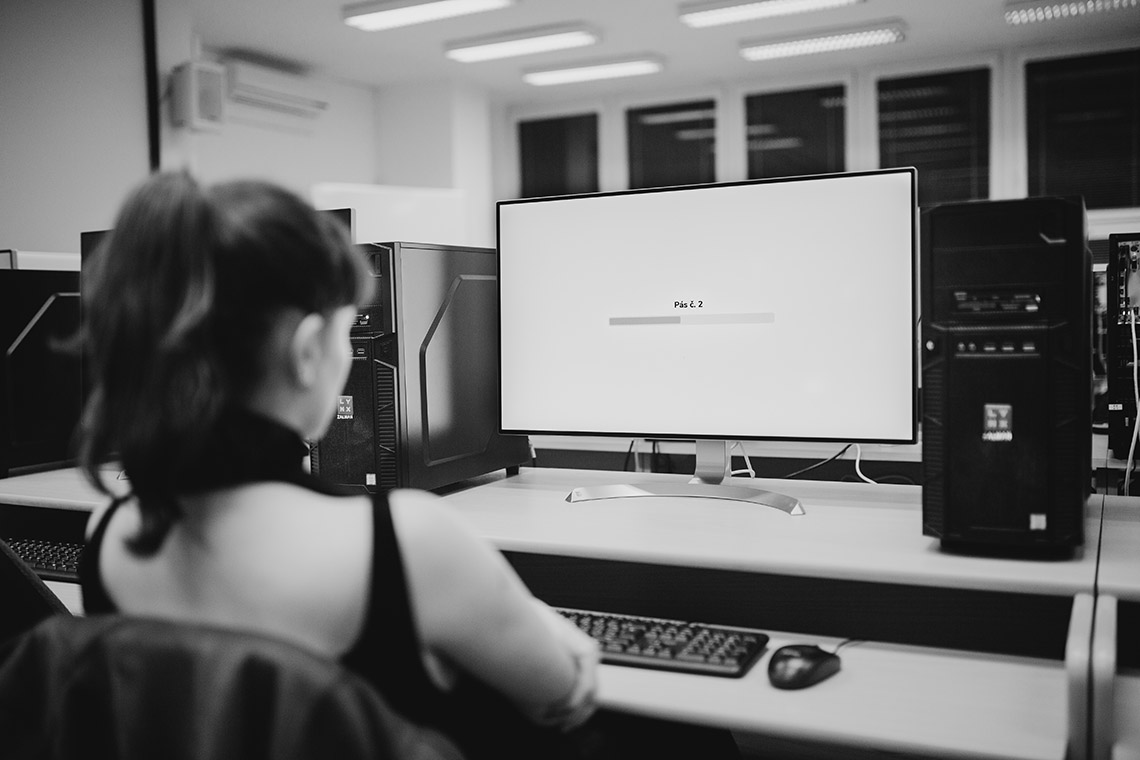

The data collection for the laboratory testing was conducted in a school laboratory in 10 groups of 1 to 12 respondents each. Every respondent used their own computer and had at least one free seat on each side so that they were not affected by others. There were no significant distractions in the laboratory during the testing.

At the beginning of the research, the users were informed that they would be comparing progress bars during the testing. They were asked to turn off their mobile phones, not to communicate with others and, once they finished the questionnaire, to stay quietly in their seats so as not to disturb others. The moderator checked the questionnaire database online to see when all the respondents had finished.

3.2 Public Testing

The data collection for public testing took 14 days.

A public link was sent to relatives and friends, and they were asked

to share the link with their circle of friends. In addition, the link was posted to 5 well-known Facebook groups with a request for help with a bachelor thesis and a short introduction to the thesis itself.

4 Results

4.1 Laboratory Results

To analyze the users’ preference between two functions (both when the order of the compared functions matters and when it does not), the same method as in Harrison et al. was selected, with the modification that it is applied to a scale from -5 to 5 (instead of -1, 0, 1). The ratings that users gave to each function pair are summed and divided by the number of ratings, which yields the mean rating. As an example, consider function 1 versus function 2. Function 1 receives the values -3, 2, 4, 0, 3, -1 (therefore function 2 gets 3, -2, -4, 0, -3, and 1). Their sum, 5, is divided by 6, giving a mean of 0.83, a positive value, so function 1 is preferred. This method takes into account that numerous small negative values in combination with a few large positive values may produce a mean of 0.
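The worked example above can be reproduced in a few lines. This is only a sketch of the arithmetic; the function name `mean_preference` and the range check are assumptions added for illustration, not part of the original analysis scripts.

```python
from statistics import mean


def mean_preference(ratings):
    """Mean of signed ratings; a positive mean means the rated function is preferred."""
    if any(not -5 <= r <= 5 for r in ratings):
        raise ValueError("ratings must lie between -5 and 5")
    return mean(ratings)


function_1 = [-3, 2, 4, 0, 3, -1]       # ratings from the example in the text
function_2 = [-r for r in function_1]   # the same answers seen from function 2

print(round(mean_preference(function_1), 2))   # 0.83
print(round(mean_preference(function_2), 2))   # -0.83

# Many small negative ratings and one large positive rating cancel out.
print(mean_preference([-1, -1, -1, -1, 4]) == 0)   # True
```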

To investigate the general preferences of participants, I tested the suggested differences in preferences using inferential statistics, to support my expectation about the general superiority of the function “Early Pause after Power”. The data were normally distributed, so I used parametric tests for the deeper analyses.

4.2 General Evaluation of Laboratory Testing

I used one-way ANOVA (analysis of variance) to test for the suggested effect in the reported preferences of participants. In the controlled laboratory conditions, 46 people were tested, of whom 33 were male and 13 female. They were aged from 19 to 26 (m = 21.93, med = 22, sd = 1.531) and came from both technical (34) and other (12) fields.

ANOVA indicated a significant effect between functions (F = 28.478, df = 4,

p < 0.001).
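For readers unfamiliar with the test, the F statistic of a one-way ANOVA can be computed as in the sketch below. It assumes one group of ratings per function, which would match the reported df = 4 for five functions (k - 1); the article does not state how the groups were formed. The function name `one_way_anova_f` is hypothetical and the numbers are invented toy values, not the study data.

```python
def one_way_anova_f(groups):
    """Return (F, df_between, df_within) for a one-way ANOVA."""
    k = len(groups)
    n_total = sum(len(g) for g in groups)
    grand_mean = sum(sum(g) for g in groups) / n_total
    group_means = [sum(g) / len(g) for g in groups]

    # Variation of the group means around the grand mean.
    ss_between = sum(
        len(g) * (m - grand_mean) ** 2 for g, m in zip(groups, group_means)
    )
    # Variation of the individual values around their own group mean.
    ss_within = sum(
        (x - m) ** 2 for g, m in zip(groups, group_means) for x in g
    )

    df_between = k - 1
    df_within = n_total - k
    f_stat = (ss_between / df_between) / (ss_within / df_within)
    return f_stat, df_between, df_within


# Invented toy ratings for three hypothetical functions, NOT the study data.
toy_groups = [[1, 2, 3], [2, 3, 4], [3, 4, 5]]
print(one_way_anova_f(toy_groups))   # (3.0, 2, 6)
```

The sketch stops at the F statistic; obtaining the p-value additionally requires the F distribution, which statistical packages such as SciPy (`scipy.stats.f_oneway`) provide.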

The analysis of the values calculated with respect to the order of functions within the comparison pair showed that users tend to prefer the first function they see. Users selected the first function in 48% of all answers and the second one in 31%, and in 21% they preferred neither. The dominance of one of the functions is especially visible in the comparisons of “Linear” with “Wave after Early Pause” and of “Wave after Power” with “Power”.
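Shares like the ones above can be tallied directly from the signed ratings. The sketch below assumes that a positive rating favours the first shown progress bar and a negative rating the second; this sign convention, the helper name `order_shares` and the sample ratings are all assumptions made for illustration.

```python
from collections import Counter


def order_shares(ratings):
    """Share of answers favouring the first bar, the second bar, or neither.

    Assumed convention: positive favours the first shown bar, negative the
    second shown bar, and 0 means no preference.
    """
    counts = Counter(
        "first" if r > 0 else "second" if r < 0 else "none" for r in ratings
    )
    return {key: counts[key] / len(ratings) for key in ("first", "second", "none")}


# Invented ratings, NOT the study data.
sample = [3, 1, -2, 0, 5, -1, 2, 0, 4, -3]
print(order_shares(sample))   # {'first': 0.5, 'second': 0.3, 'none': 0.2}
```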

The overall values, calculated without respect to the order, confirmed the hypothesis that the “Early Pause after Power” function is the most dominant of all. The second strongest (perceived as second fastest) is the “Power” function, followed by “Wave after Power” and “Linear”, and the last is “Wave after Early Pause”.

Figure 6: Mean Values in Laboratory Testing

Values with a significant difference from the comparisons without respect to the mutual order were then used to form a topological order of the functions. The final order of functions is shown in Figure 7: the leftmost function was perceived as the slowest, a solid line represents a significantly different relation, and a dashed line represents a relation that was not significantly different.

Figure 7: Topological Order of Functions in Laboratory Testing
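Forming a topological order from pairwise "slower than" relations can be sketched with Python's standard `graphlib` module. The edge list below is an assumption: it contains only neighbouring functions from the ranking reported for the laboratory testing and treats all of them as significant, which the article does not state for each individual pair.

```python
from graphlib import TopologicalSorter

# (slower, faster) pairs. Assumption: only neighbours in the reported
# laboratory ranking are listed, and all are treated as significant.
slower_than = [
    ("Wave after Early Pause", "Linear"),
    ("Linear", "Wave after Power"),
    ("Wave after Power", "Power"),
    ("Power", "Early Pause after Power"),
]

sorter = TopologicalSorter()
for slower, faster in slower_than:
    sorter.add(faster, slower)   # `slower` must come before `faster`

order = list(sorter.static_order())
print(order)
```

The printed list runs from the slowest to the fastest perceived function. If the pairwise results contained a cycle (A slower than B, B slower than C, C slower than A), `static_order()` would raise `graphlib.CycleError`, so the same step also checks whether a consistent order exists at all.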

4.3 Public Results

As in the laboratory testing, the mean method (see Chapter 4.1) was chosen. The public testing was conducted on anonymous respondents using an online questionnaire.

To investigate the general preferences of participants, inferential statistics were used again to support the expectations. The results were again normally distributed; therefore, parametric tests were used for the deeper analysis.

4.4 General Evaluation of Public Testing

As in the laboratory testing, I used one-way ANOVA to test for the suggested effect in the reported preferences of general public users. During 14 days of testing in the public environment, 192 people completed the whole questionnaire (answers from people who did not complete it were excluded). They were aged from 16 to 68 (m = 27.545, med = 23, sd = 10.598) and came from both technical (84) and other (108) fields.

ANOVA indicated a significant effect between functions (F = 53.827, df = 4, p < 0.001), similar to the laboratory testing.

The phenomenon of users selecting the first function more often occurred again during the public experiment. However, the percentage difference is smaller than in the laboratory experiment (the first function was selected in 41% of cases, the second one in 35%, and zero was selected in 24%).

During the public experiment, the mean values showed no visible dominance of the first seen function. On the contrary, a new behaviour appeared: users preferred the second function in the comparison of the “Linear” function with the “Wave after Power” function.

When the order of functions within the comparison pair is not taken into account, the hypothesis that the “Early Pause after Power” function is perceived as the fastest was confirmed again. The other functions also kept the same relative order; the only differences were in their mutual mean values and significance.

Figure 8: Mean Values in Public Testing

As in the laboratory experiment, values with a significant difference from the comparisons without respect to the mutual order were then used to form a topological order of the functions. The final order of functions is shown in Figure 9: the leftmost function was perceived as the slowest, a solid line represents a significantly different relation, and a dashed line represents a relation that was not significantly different.

Figure 9: Topological Order of Functions in Public Testing

5 Discussion

Thanks to the comparison of the new functions with two of the original ones, it is now possible to place the new functions on the number line created by Harrison et al. [1, p. 117, Figure 4]. Even though it is not possible to assign the new functions an exact number, the topological order of functions gives an idea of how they would fit into the original order (Figure 10). In the figure, green points mark the original functions [1] and pink points mark the functions investigated here. Bottom branches represent an indefinite mutual order of the functions on the same interval of the axis.

Figure 10: Order of Original Functions and New Ones

The analysis comparing the laboratory testing with the public one did not uncover any significant differences between the results collected from users in these two environments. The only difference between the two experiments was visible in the analysis of relative values with respect to the mutual order, specifically in the comparison of the “Linear” and “Wave after Power” functions. In the laboratory experiment, the testing in both orders resulted in favour of the “Wave after Power” function.

5.1 Second Shown Progress Bar Phenomenon

In contrast to the laboratory experiment, the results of the public testing for this pair were indecisive. Each of the two functions was preferred over the other once, but surprisingly only when it was shown as the second function in the comparison. This phenomenon has not yet been observed or described, not even by Harrison et al., and it would be interesting to study it in more depth. Considering that the resulting values in the laboratory experiment were both very close to zero, I do not assume that the phenomenon of the second shown function was a statistical exception.

5.2 Effects of Distortions

The final order of the tested functions shows that the “Power” function, in combination with any other function, makes progress bars be perceived as faster than if the data were projected linearly. It is therefore possible to say that the “Power” function is dominant regardless of the second function it is combined with, whether that function tends to make the progress bar look slower or faster. In both situations, the Peak-and-End effect dominates and improves the overall impression (the progress bar seems faster).

This finding widens the practical use of distortions. On the one hand, as Harrison et al. have already stated, progress bars whose progress corresponds to the approximated state are sufficient to visualize the ongoing process. On the other hand, thanks to these findings, it is possible to apply a distortion to any progress bar without knowing its future behaviour (no matter whether it will stop for a while, periodically slow down or show some other combination of behaviours). In the commercial sphere, this could be well suited to enhancing user experience.

Conclusion

The goal of this work was to broaden and confirm the relevance of existing

research on distortions used to manipulate users’ perception of progress bars made by Harrison et

al. [1].

Experiments comparing both new and original functions showed that the Peak-and-End phenomenon has a large positive effect on the user's perception. Moreover, it can inhibit negative effects like periodic slowing down or pausing. The hypothesis that the “Power” function is perceived better than the “Linear” one was also confirmed. All these results are applicable in software development to improve the user experience, especially by shortening the perceived duration of progress bars that behave unexpectedly.

The work has also explored a new phenomenon of “second shown progress bar”

that, in some cases, contradicts the principles that resulted from the original work by Harrison

et al.

The collected data also served as a source for various exploratory analyses. These analyses were based on demographic differences (gender, specialization, age) and showed differences between genders and between millennials and older respondents: females and older users tend to use a larger domain of answers (for more information, see the full-text work).

Future Work

The findings of this work open various topics for future discussion. The discovery of the “second shown progress bar” phenomenon established a new field that could be investigated. Comparing other functions with trends similar to the “Linear” and “Wave after Power” functions might reveal new findings that would help to describe it. Also, some new behaviours might occur if the strength of the “Power” function is greater (for example, as in the “Fast Power” function in the original work [1]).

Using two different environmental conditions showed that both experiments produce similar results. Therefore, in future work, it would be sufficient to conduct research in only one of these environments.

It would also be interesting to investigate longer progress bar durations, which might reveal new phenomena and further extend the use of distortions for improving user experience.

Because females behaved differently during both experiments (see the full-text work), further research could focus on this topic.

Future research might also benefit from the web application implemented for this work (available in the University Archive), which is easily adaptable to many other kinds of experiments focused on progress bar distortions.

References

[1] HARRISON, Chris; AMENTO, Brian; KUZNETSOV, Stacey; BELL, Robert. Rethinking the Progress Bar. In: Proceedings of the 20th Annual ACM Symposium on User Interface Software and Technology. Newport, Rhode Island, USA: ACM, 2007, pp. 115–118. UIST ’07. ISBN 978-1-59593-679-0. Available from DOI: 10.1145/1294211.1294231.

[2] LANGER, Thomas; SARIN, Rakesh; WEBER, Martin. The retrospective evaluation of payment sequences: duration neglect and peak-and-end effects. Journal of Economic Behavior & Organization. 2005, vol. 58, no. 1, pp. 157–175. ISSN 0167-2681. Available from DOI: 10.1016/j.jebo.2004.01.001.

[3] HARTSON, Rex; PYLA, Pardha. The UX Book: Process and Guidelines for Ensuring a Quality User Experience. 1st ed. San Francisco, CA, USA: Morgan Kaufmann Publishers Inc., 2012. ISBN 0123852412, 9780123852410.

[4] “Millennials”. https://en.wikipedia.org/wiki/Millennials. Retrieved 2018-12-9.

Full-text work available here.